Consider a market researcher who spends hours manually gathering pricing information from various eCommerce websites, analyzing it, and building competitive pricing strategies from the resulting insights. Had he automated the collection step instead of relying on traditional scraping practices, he could have saved much of that time. He could then focus on core tasks such as analyzing market trends, identifying customer preferences, and refining pricing strategies to stay competitive.

In essence, automation offers numerous advantages for businesses, saving time and resources while enhancing accuracy and consistency in data collection and analysis. The scenario mentioned above was an example of just one obstacle that businesses often face during web data extraction. Below, we will explore a few other major challenges that firms encounter in web scraping and how to overcome them using automated solutions.

Common Web Scraping Challenges and How to Address Them with Automation

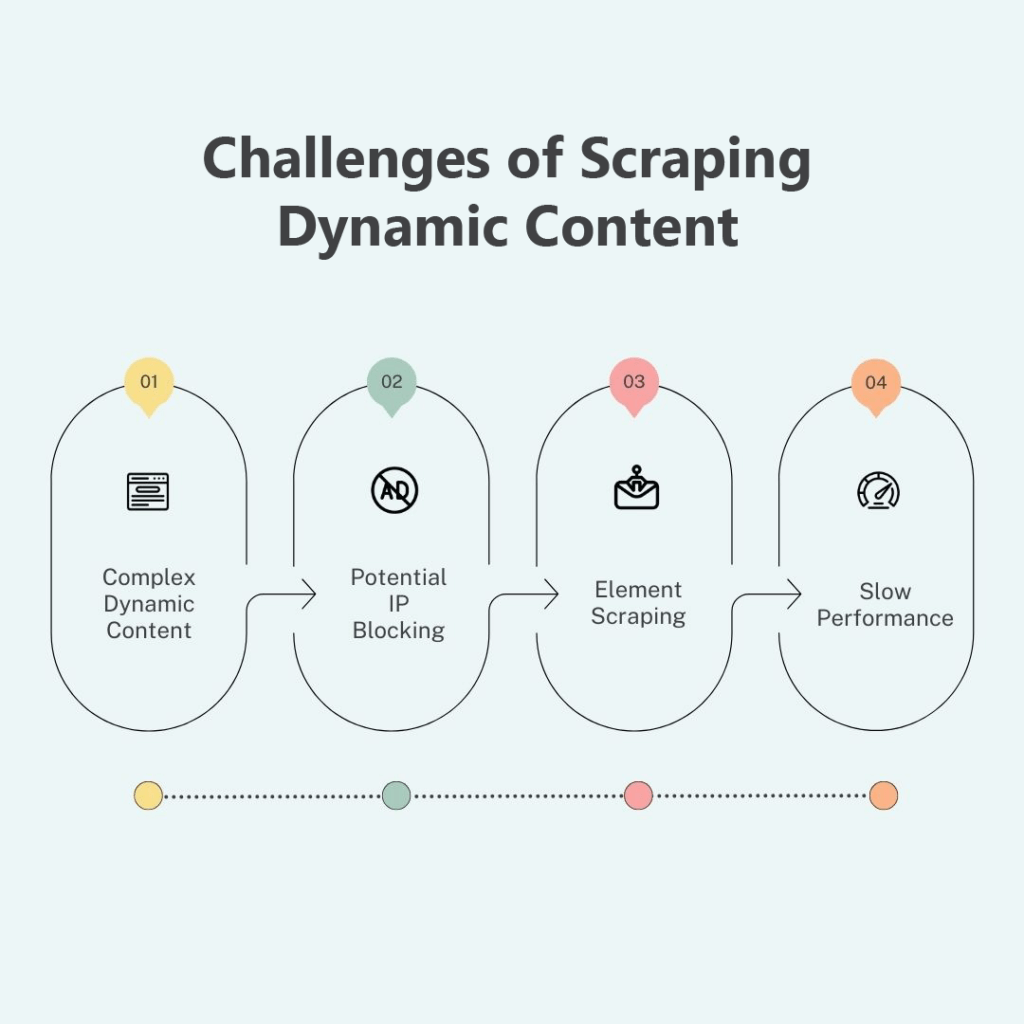

- Scraping Dynamic Content from Websites

Many websites today use JavaScript to create dynamic content that is more interactive and engaging. Unlike static content, which remains fixed on the page (like the text of a simple article), dynamic content is generated and updated in real time. Traditional web scraping methods fetch a page's HTML and parse it, but dynamic content is produced by JavaScript code that runs in the browser after the initial HTML has loaded. So, if you simply fetch the HTML source of a page with dynamic content, you won’t capture the elements generated afterwards. This hurdle is particularly acute in industries such as finance, where real-time data is crucial.

For example, a financial institution might need to scrape stock prices from various sources to analyze market trends in real-time. Without the ability to capture dynamic content, they would miss out on real-time fluctuations and potentially make uninformed decisions.

Solution:

Automation tools like headless browsers (browsers running in the background without a graphical interface) can render JavaScript and access the complete content of the page, simplifying dynamic website scraping needs.
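As a minimal sketch of this approach, the function below uses Playwright's synchronous Python API to load a page in headless Chromium, wait for a JavaScript-rendered element, and return the resulting HTML. Playwright is only one possible choice (the article does not name a tool), and the function name `fetch_rendered_html` and its parameters are illustrative assumptions.

```python
def fetch_rendered_html(url: str, wait_for: str, timeout_ms: int = 10_000) -> str:
    """Return the page's HTML after its JavaScript has run.

    `wait_for` is a CSS selector for an element that only exists once the
    dynamic content has been rendered (an assumption about the target page).
    """
    # Imported lazily so this module still loads where Playwright is not installed.
    from playwright.sync_api import sync_playwright

    with sync_playwright() as p:
        # headless=True runs the browser without a graphical window.
        browser = p.chromium.launch(headless=True)
        try:
            page = browser.new_page()
            page.goto(url)
            # Block until an element matching the selector appears, or time out.
            page.wait_for_selector(wait_for, timeout=timeout_ms)
            # page.content() returns the DOM as it stands now, scripts included.
            return page.content()
        finally:
            browser.close()
```

A call such as `fetch_rendered_html("https://example.com/quotes", "table.quotes")` (a hypothetical URL and selector) would return markup that a plain HTTP fetch of the same page would not contain.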

- Dealing with Evolving Website Structures

Websites often undergo frequent updates to improve user experience or incorporate new features. These changes can break scraping scripts that rely on specific HTML structures. In industries like travel, where websites frequently update their layouts to showcase new offerings or improve navigation, this presents a significant challenge.

For example, a travel agency might struggle to scrape hotel listings or flight details if the website structure changes frequently.

Solution:

Automation frameworks offer functionality for handling evolving website structures. By targeting elements with XPath or CSS selectors that rely on stable attributes (IDs, descriptive class names, data attributes) rather than on an element's position in the page, scraping scripts become more tolerant of structural changes.
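One way to make selector-based scripts more tolerant of layout changes is to try several selectors in order and use the first one that matches. The sketch below illustrates the idea with the limited XPath support in Python's standard `xml.etree.ElementTree`. It assumes well-formed markup (real-world HTML usually needs a more forgiving parser), and the selectors and sample snippets are hypothetical.

```python
import xml.etree.ElementTree as ET
from typing import Optional, Sequence

# Hypothetical selectors, ordered from most specific to most generic.
PRICE_SELECTORS = (
    ".//span[@id='room-price']",
    ".//span[@class='price']",
    ".//*[@itemprop='price']",
)


def extract_first(root: ET.Element, selectors: Sequence[str]) -> Optional[str]:
    """Return the text of the first element matched by any selector, else None."""
    for selector in selectors:
        node = root.find(selector)
        if node is not None and node.text and node.text.strip():
            return node.text.strip()
    return None


# Two versions of the "same" page: the site changed its layout between them.
old_layout = ET.fromstring("<div><span id='room-price'>120 EUR</span></div>")
new_layout = ET.fromstring("<section><p><b itemprop='price'>135 EUR</b></p></section>")

print(extract_first(old_layout, PRICE_SELECTORS))  # 120 EUR
print(extract_first(new_layout, PRICE_SELECTORS))  # 135 EUR
```

When every selector fails, the function returns `None`, which a monitoring job can treat as a signal that the page structure has changed and the selector list needs updating.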

- Bypassing Anti-Scraping Measures

To protect their data, websites often implement anti-scraping techniques and measures such as CAPTCHAs or IP blocking. These help businesses protect their websites from data theft, spam, and other malicious activities. However, when these measures are deployed, they can hinder web scraping efforts, particularly for industries like eCommerce, where businesses rely on competitor analysis and market research to stay competitive.

For instance, an eCommerce seller might need to scrape product information from competitor websites to identify trending products.

Solution:

Automation tools can work around these measures by routing requests through proxy servers and rotating IP addresses, pacing requests to mimic human browsing behavior, and handling CAPTCHAs when they appear. Together, these techniques help businesses avoid being blocked and continue to scrape data without interruptions.
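The rotation idea can be sketched with the standard library alone: cycle through a pool of proxies and build a separate `urllib` opener for each request, with a randomized pause between requests. The class and function names and the proxy addresses are hypothetical; a real deployment would typically use a managed proxy pool.

```python
import itertools
import random
import urllib.request
from typing import Iterable


class ProxyRotator:
    """Hand out proxies from a fixed pool in round-robin order."""

    def __init__(self, proxies: Iterable[str]) -> None:
        self._proxies = list(proxies)
        if not self._proxies:
            raise ValueError("at least one proxy is required")
        self._cycle = itertools.cycle(self._proxies)

    def next_proxy(self) -> str:
        return next(self._cycle)

    def opener(self) -> urllib.request.OpenerDirector:
        """Build an opener that sends HTTP and HTTPS traffic via the next proxy."""
        proxy = self.next_proxy()
        handler = urllib.request.ProxyHandler({"http": proxy, "https": proxy})
        return urllib.request.build_opener(handler)


def human_like_delay(base_seconds: float = 2.0, jitter_seconds: float = 1.0) -> float:
    """Return a randomized pause length so requests are not evenly spaced."""
    return base_seconds + random.uniform(0, jitter_seconds)


# Hypothetical proxy addresses, for illustration only.
rotator = ProxyRotator(["http://proxy-a.example:8080", "http://proxy-b.example:8080"])
```

Each call to `rotator.opener().open(url)` would then leave through the next proxy in the pool, and sleeping for `human_like_delay()` seconds between calls spaces the requests unevenly.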

- Ensuring Scalability during Web Scraping

Another common challenge in web scraping, especially when dealing with large volumes of data or frequent updates, is scalability. Traditional web scraping methods rely on manual scripting or simple libraries to fetch and parse HTML content from web pages. While these approaches may suffice for small-scale scraping tasks, they quickly become impractical when scalability is required. As the volume of data increases or the frequency of updates grows, traditional tools struggle to keep up. Manual scripts may fail to handle the huge volume of data, leading to performance issues, incomplete scrapes, or even website bans due to excessive requests.

For example, an eCommerce company may want to scrape product information from numerous online retailers to monitor pricing trends and competitor activity. As the number of products and retailers grows, traditional scraping methods struggle to keep pace, resulting in incomplete data retrieval and outdated insights, hampering the company’s competitive edge.

Solution:

Automation tools offer a scalable solution to these challenges without the need for switching between tools. They often employ distributed computing and cloud infrastructure, enabling them to scale resources dynamically based on demand. This ensures reliable performance and high throughput, even when dealing with massive datasets or frequent updates.
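Distributed and cloud-based scraping is beyond the scope of a short example, but the underlying idea of spreading requests across many workers can be sketched on a single machine with a thread pool. This is a simplified stand-in for the distributed setups described above, and the names `fetch` and `scrape_all` are illustrative assumptions.

```python
import urllib.request
from concurrent.futures import ThreadPoolExecutor, as_completed
from typing import Callable, Dict, Iterable, Tuple


def fetch(url: str, timeout: float = 10.0) -> bytes:
    """Download one page and return its raw body."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return response.read()


def scrape_all(
    urls: Iterable[str],
    fetcher: Callable[[str], bytes] = fetch,
    max_workers: int = 8,
) -> Tuple[Dict[str, bytes], Dict[str, Exception]]:
    """Fetch many URLs concurrently, keeping successes and failures apart."""
    results: Dict[str, bytes] = {}
    errors: Dict[str, Exception] = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Map each future back to its URL so results can be labeled.
        futures = {pool.submit(fetcher, url): url for url in urls}
        for future in as_completed(futures):
            url = futures[future]
            try:
                results[url] = future.result()
            except Exception as exc:  # one failed page must not stop the batch
                errors[url] = exc
    return results, errors
```

Collecting failures separately makes incomplete scrapes visible, so failed URLs can be retried later. `max_workers` also caps how many requests are in flight at once, which helps avoid overwhelming the target site.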

- Abiding by Ethical and Legal Considerations

Respecting ethical and legal considerations is essential when conducting web scraping activities. Businesses must parse and analyze the contents of a website’s robots.txt file to understand the website’s crawling rules and scraping guidelines and avoid overloading servers with excessive requests. This is important for industries across the board, as violating ethical or legal guidelines can damage reputations and result in legal consequences.

Solution:

Automation tools can be programmed to adhere to robots.txt directives and to apply rate limiting that regulates the frequency of scraping requests. By respecting scraping guidelines and controlling request rates, businesses can collect data responsibly while avoiding potential legal and ethical pitfalls. This ensures that industries relying on web scraping can gather information ethically and maintain positive relationships with website owners and users.
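Both ideas can be sketched with Python's standard library: `urllib.robotparser` answers whether a URL may be fetched and what crawl delay is requested, and a small limiter enforces a minimum gap between requests. The user agent, the robots.txt content, and the URLs below are hypothetical, and the rules are parsed from an inline string so the example runs without network access.

```python
import time
import urllib.robotparser
from typing import Optional

USER_AGENT = "example-research-bot"  # hypothetical user agent

# Hypothetical robots.txt content; a real scraper would download the site's file.
ROBOTS_TXT = """\
User-agent: *
Disallow: /checkout/
Crawl-delay: 2
"""


def build_parser(robots_txt: str) -> urllib.robotparser.RobotFileParser:
    parser = urllib.robotparser.RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


class RateLimiter:
    """Enforce a minimum interval between consecutive requests."""

    def __init__(self, min_interval: float) -> None:
        self.min_interval = min_interval
        self._last: Optional[float] = None

    def wait(self) -> float:
        """Sleep if the previous request was too recent; return seconds slept."""
        slept = 0.0
        if self._last is not None:
            remaining = self.min_interval - (time.monotonic() - self._last)
            if remaining > 0:
                time.sleep(remaining)
                slept = remaining
        self._last = time.monotonic()
        return slept


parser = build_parser(ROBOTS_TXT)
# Fall back to a one-second gap if the site specifies no crawl delay.
delay = parser.crawl_delay(USER_AGENT) or 1.0
limiter = RateLimiter(delay)

for url in ("https://example.com/products/1", "https://example.com/checkout/cart"):
    if parser.can_fetch(USER_AGENT, url):
        limiter.wait()
        print("allowed:", url)  # the actual request would go here
    else:
        print("skipped:", url)
```

With the rules above, the product page is allowed and the checkout page is skipped. In practice, `RobotFileParser.set_url()` followed by `read()` loads the live robots.txt file instead of an inline string.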

Automate Web Scraping with Expert Assistance

Developing and maintaining robust scripts to automate data extraction demands expertise in programming languages such as Python, SQL, and Scala, along with familiarity with data extraction tools and APIs. This poses a barrier for in-house teams that lack these technical skills. Allocating dedicated resources to script and API development can divert attention from core business objectives, and hiring dedicated staff for the task can strain budgets. This is where opting for web data extraction services can help!

External service providers leverage customized scripts developed by their teams to automate web scraping. They are proficient not only in automating web scraping but also in managing the entire data extraction process for you. They can collect data (files, text, images, etc.) from various online sources. Additionally, they offer data management services, alleviating the burden of cleaning and standardizing the scraped data. So you receive analysis-ready data without any extra hassle.

To Conclude

Web scraping presents businesses with many challenges, from scraping dynamic content and coping with evolving website structures to bypassing anti-scraping measures, scaling up, and staying within ethical and legal bounds. However, by embracing automation, these hurdles can be effectively overcome. Looking ahead, the role of automation in web scraping is poised to expand. As technology advances and data becomes increasingly pivotal in decision-making, businesses that harness the power of automation will not only save time and resources but also stay ahead of the competition.
